The case of Weerahandi v. Shelesh is a classic example of how Section 230 (a provision of the Communications Decency Act (CDA), found at 47 USC 230) shielded online intermediaries from alleged tort liability occasioned by their users.

Background Facts

Plaintiff, a YouTuber, filed a pro se lawsuit against a number of other YouTubers, as well as Google and YouTube, asserting claims for defamation, among other things. The allegations arose from a 2013 incident in which one of the individual defendants sent what plaintiff believed to be a “false and malicious” DMCA takedown notice to YouTube. One of the defendants later took the contact information plaintiff had to provide in the counter-notification and allegedly disseminated that information to others who were alleged to have published additional defamatory YouTube videos.

Google and YouTube were also named as defendants for “failure to remove the videos” and for not taking “corrective action”. These parties moved to dismiss the complaint, claiming immunity under Section 230. The court granted the motion to dismiss.

Section 230’s Protections

Section 230 provides, in pertinent part, that “[n]o provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.” 47 U.S.C. § 230(c)(1). Section 230 also provides that “[n]o cause of action may be brought and no liability may be imposed under any State or local law that is inconsistent with this section.” 47 U.S.C. § 230(e)(3).

The CDA also “proscribes liability in situations where an interactive service provider makes decisions ‘relating to the monitoring, screening, and deletion of content from its network.’ ” Obado v. Magedson, 612 Fed.Appx. 90, 94–95 (3d Cir. 2015). Courts have recognized that Congress conferred broad immunity upon internet companies by enacting the CDA, because the breadth of the internet precludes such companies from policing content as traditional media have. See Jones v. Dirty World Entm’t Recordings LLC, 755 F.3d 398, 407 (6th Cir. 2014); Batzel v. Smith, 333 F.3d 1018, 1026 (9th Cir. 2003); Zeran v. Am. Online, Inc., 129 F.3d 327, 330 (4th Cir. 1997); DiMeo v. Max, 433 F. Supp. 2d 523, 528 (E.D. Pa. 2006).

How Section 230 Applied Here

In this case, the court found that the CDA barred plaintiff’s claims against Google and YouTube. Both Google and YouTube were considered “interactive computer service[s].” Parker v. Google, Inc., 422 F. Supp. 2d 492, 551 (E.D. Pa. 2006). Plaintiff did not allege that Google or YouTube played any role in producing the allegedly defamatory content. Instead, Plaintiff alleged both websites failed to remove the defamatory content, despite his repeated requests.

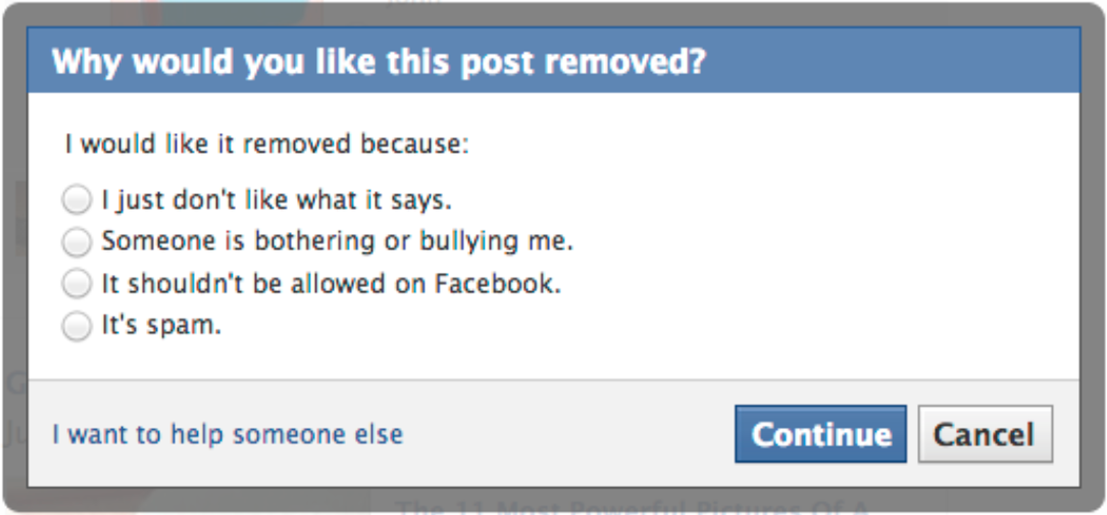

Plaintiff did not cite any authority in his opposition to Google and YouTube’s motion, and instead argued that the CDA did not bar claims for the “failure to remove the videos” or to “take corrective action.” The court held that, to the contrary, the CDA expressly protected internet companies from such liability. Under the CDA, plaintiff could not assert a claim against Google or YouTube for decisions “relating to the monitoring, screening, and deletion of content from its network.” Obado, 612 Fed.Appx. at 94–95; 47 U.S.C. § 230(c)(1) (“No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.”). For these reasons, the court found the CDA barred plaintiff’s claims against Google and YouTube.

Weerahandi v. Shelesh, 2017 WL 4330365 (D.N.J. September 29, 2017)

About the Author: Evan Brown is a Chicago technology and intellectual property attorney. Call Evan at (630) 362-7237, send email to ebrown [at] internetcases.com, or follow him on Twitter @internetcases. Read Evan’s other blog, UDRP Tracker, for information about domain name disputes.
